Research Methods Knowledge Base

The t-test assesses whether the means of two groups are statistically different from each other. This analysis is appropriate whenever you want to compare the means of two groups, and especially appropriate as the analysis for the posttest-only two-group randomized experimental design.

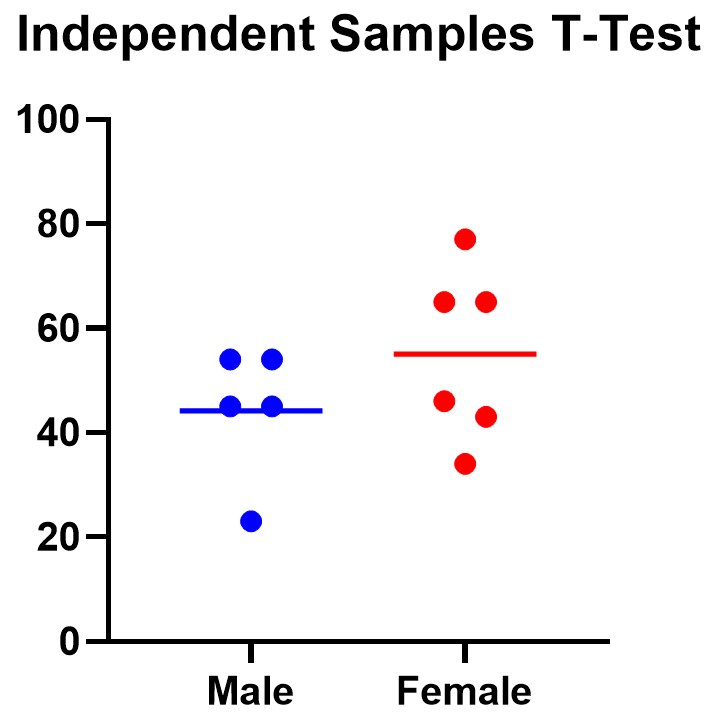

Figure 1 shows the distributions for the treated (blue) and control (green) groups in a study. Actually, the figure shows the idealized distribution; the actual distribution would usually be depicted with a histogram or bar graph. The figure indicates where the control and treatment group means are located. The question the t-test addresses is whether the means are statistically different.

What does it mean to say that the averages for two groups are statistically different? Consider the three situations shown in Figure 2. The first thing to notice about the three situations is that the difference between the means is the same in all three. But you should also notice that the three situations don’t look the same: they tell very different stories. The top example shows a case with moderate variability of scores within each group. The second situation shows the high-variability case. The third shows the case with low variability. Clearly, we would conclude that the two groups appear most different or distinct in the bottom or low-variability case. Why? Because there is relatively little overlap between the two bell-shaped curves. In the high-variability case, the group difference appears least striking because the two bell-shaped distributions overlap so much.

This leads us to a very important conclusion: when we are looking at the differences between scores for two groups, we have to judge the difference between their means relative to the spread or variability of their scores. The t-test does just this.

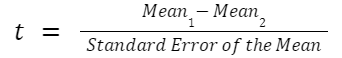

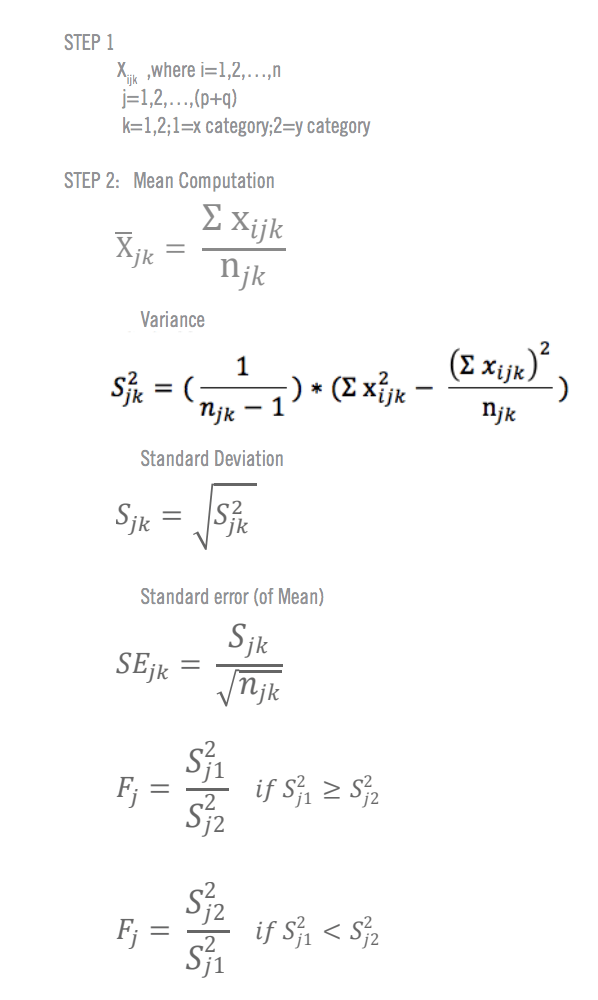

Statistical Analysis of the t-test

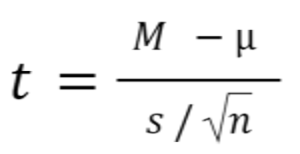

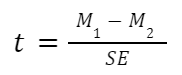

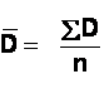

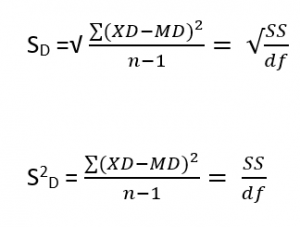

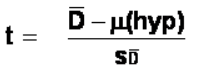

The formula for the t-test is a ratio. The top part of the ratio is just the difference between the two means or averages. The bottom part is a measure of the variability or dispersion of the scores. This formula is essentially another example of the signal-to-noise metaphor in research: the difference between the means is the signal that, in this case, we think our program or treatment introduced into the data; the bottom part of the formula is a measure of variability that is essentially noise that may make it harder to see the group difference. Figure 3 shows the formula for the t-test and how the numerator and denominator are related to the distributions.

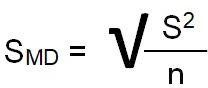

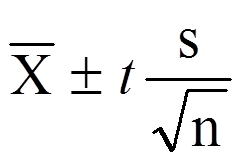

The top part of the formula is easy to compute: just find the difference between the means. The bottom part is called the standard error of the difference. To compute it, we take the variance for each group and divide it by the number of people in that group. We add these two values and then take their square root. The specific formula for the standard error of the difference between the means is:

$$SE(\bar{X}_T - \bar{X}_C) = \sqrt{\frac{var_T}{n_T} + \frac{var_C}{n_C}}$$

Remember that the variance is simply the square of the standard deviation.

The final formula for the t-test is:

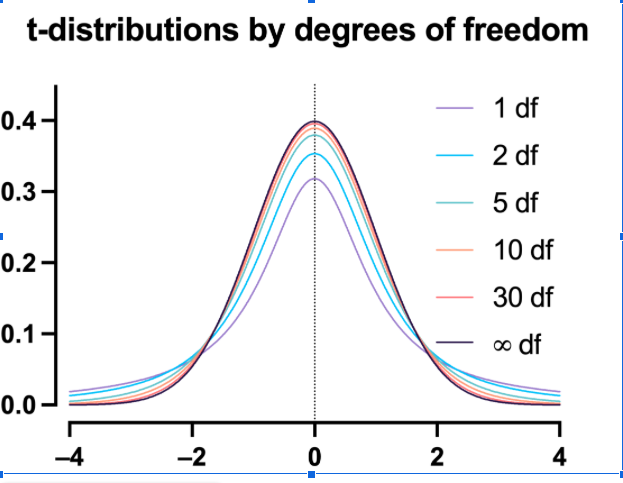
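The computation described above can be sketched in a few lines of Python. This is a minimal illustration, not the output of any particular statistics package: the function name `t_statistic` and the two score lists are hypothetical, made up only to show the arithmetic (difference between the means divided by the standard error of the difference).

```python
import math
import statistics

def t_statistic(treated, control):
    """t-value: difference between the group means over the standard error of the difference."""
    mean_diff = statistics.mean(treated) - statistics.mean(control)
    # Variance of each group divided by its group size, summed, then square-rooted.
    se_diff = math.sqrt(
        statistics.variance(treated) / len(treated)
        + statistics.variance(control) / len(control)
    )
    return mean_diff / se_diff

# Hypothetical posttest scores, invented for illustration only.
treated = [52.0, 55.0, 49.0, 58.0, 54.0, 56.0]
control = [48.0, 50.0, 47.0, 53.0, 49.0, 53.0]

t_value = t_statistic(treated, control)
df = len(treated) + len(control) - 2  # sum of the persons in both groups minus 2
print(f"t = {t_value:.3f}, df = {df}")
```

Swapping the two arguments flips the sign of the result, which matches the rule that the t-value is positive when the first mean is larger.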

The t-value will be positive if the first mean is larger than the second and negative if it is smaller. Once you compute the t-value you have to look it up in a table of significance to test whether the ratio is large enough to say that the difference between the groups is not likely to have been a chance finding. To test the significance, you need to set a risk level (called the alpha level). In most social research, the “rule of thumb” is to set the alpha level at .05. This means that five times out of a hundred you would find a statistically significant difference between the means even if there was none (i.e. by “chance”). You also need to determine the degrees of freedom (df) for the test. In the t-test, the degrees of freedom is the sum of the persons in both groups minus 2. Given the alpha level, the df, and the t-value, you can look the t-value up in a standard table of significance (available as an appendix in the back of most statistics texts) to determine whether the t-value is large enough to be significant. If it is, you can conclude that the means for the two groups are different (even given the variability). Fortunately, statistical computer programs routinely print the significance test results and save you the trouble of looking them up in a table.
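To make the meaning of the alpha level concrete, here is a small simulation sketch. It assumes two groups of 20 drawn from the same normal population (so there is no true difference) and uses 2.024 as the two-tailed .05 critical value for 38 degrees of freedom, taken from a standard t table. The function names, the population parameters, and the seed are all hypothetical choices for illustration.

```python
import math
import random
import statistics

def t_statistic(a, b):
    """Difference between means over the standard error of the difference."""
    se = math.sqrt(statistics.variance(a) / len(a) + statistics.variance(b) / len(b))
    return (statistics.mean(a) - statistics.mean(b)) / se

def false_positive_rate(n_experiments=2000, n_per_group=20, critical_t=2.024, seed=1):
    """Share of experiments declared 'significant' when both groups share one population."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_experiments):
        a = [rng.gauss(50, 10) for _ in range(n_per_group)]
        b = [rng.gauss(50, 10) for _ in range(n_per_group)]
        if abs(t_statistic(a, b)) > critical_t:
            hits += 1
    return hits / n_experiments

rate = false_positive_rate()
print(f"share of 'significant' results with no true difference: {rate:.3f}")
```

The printed share should land near .05, which is what "five times out of a hundred" means in practice.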

The t-test, one-way Analysis of Variance (ANOVA) and a form of regression analysis are mathematically equivalent (see the statistical analysis of the posttest-only randomized experimental design) and would yield identical results.
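One way to see this equivalence is to check that the squared t-value equals the F-ratio from a one-way ANOVA on the same two groups. The sketch below assumes the pooled-variance form of the t-test (with equal group sizes it gives the same standard error as the formula above); the function names and the data are hypothetical.

```python
import statistics

def pooled_t(a, b):
    """Two-group t-value using a pooled variance estimate."""
    n1, n2 = len(a), len(b)
    pooled_var = (
        (n1 - 1) * statistics.variance(a) + (n2 - 1) * statistics.variance(b)
    ) / (n1 + n2 - 2)
    se = (pooled_var * (1 / n1 + 1 / n2)) ** 0.5
    return (statistics.mean(a) - statistics.mean(b)) / se

def anova_f(groups):
    """One-way ANOVA F-ratio: between-group mean square over within-group mean square."""
    all_values = [x for g in groups for x in g]
    grand_mean = statistics.mean(all_values)
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

# Hypothetical scores, invented for illustration only.
treated = [52.0, 55.0, 49.0, 58.0, 54.0, 56.0]
control = [48.0, 50.0, 47.0, 53.0, 49.0, 53.0]

t_value = pooled_t(treated, control)
f_value = anova_f([treated, control])
print(f"t squared = {t_value ** 2:.4f}, F = {f_value:.4f}")
```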


The Ultimate Guide to T Tests

Get all of your t test questions answered here


The t test is one of the simplest statistical techniques that is used to evaluate whether there is a statistical difference between the means from up to two different samples. The t test is especially useful when you have a small number of sample observations (under 30 or so), and you want to make conclusions about the larger population.

The characteristics of the data dictate the appropriate type of t test to run. All t tests are used as standalone analyses for very simple experiments and research questions as well as to perform individual tests within more complicated statistical models such as linear regression. In this guide, we’ll lay out everything you need to know about t tests, including providing a simple workflow to determine what t test is appropriate for your particular data or if you’d be better suited using a different model.

What is a t test?

A t test is a statistical technique used to quantify the difference between the mean (average value) of a variable from up to two samples (datasets). The variable must be numeric. Some examples are height, gross income, and amount of weight lost on a particular diet.

A t test tells you whether the difference you observe is “surprising” compared with the difference you would expect from sampling variability alone. T tests use t-distributions to evaluate that expected variability. When you have a reasonably large sample (over 30 or so observations), the t test can still be used, but tests that use the normal distribution (the z test) can be used in its place.

Sometimes t tests are called “Student’s” t tests, which is simply a reference to their unusual history.

The name comes from William Gosset, a brewer at the Guinness Brewery, who published the method under the pseudonym “Student”. He wanted to get information out of very small sample sizes (often 3-5) because it took so much effort to brew each keg for his samples.

When should I use a t test?

A t test is appropriate to use when you’ve collected a small, random sample from some statistical “population” and want to compare the mean from your sample to another value. The value for comparison could be a fixed value (e.g., 10) or the mean of a second sample.

For example, if your variable of interest is the average height of sixth graders in your region, then you might measure the height of 25 or 30 randomly-selected sixth graders. A t test could be used to answer questions such as, “Is the average height greater than four feet?”

How does a t test work?

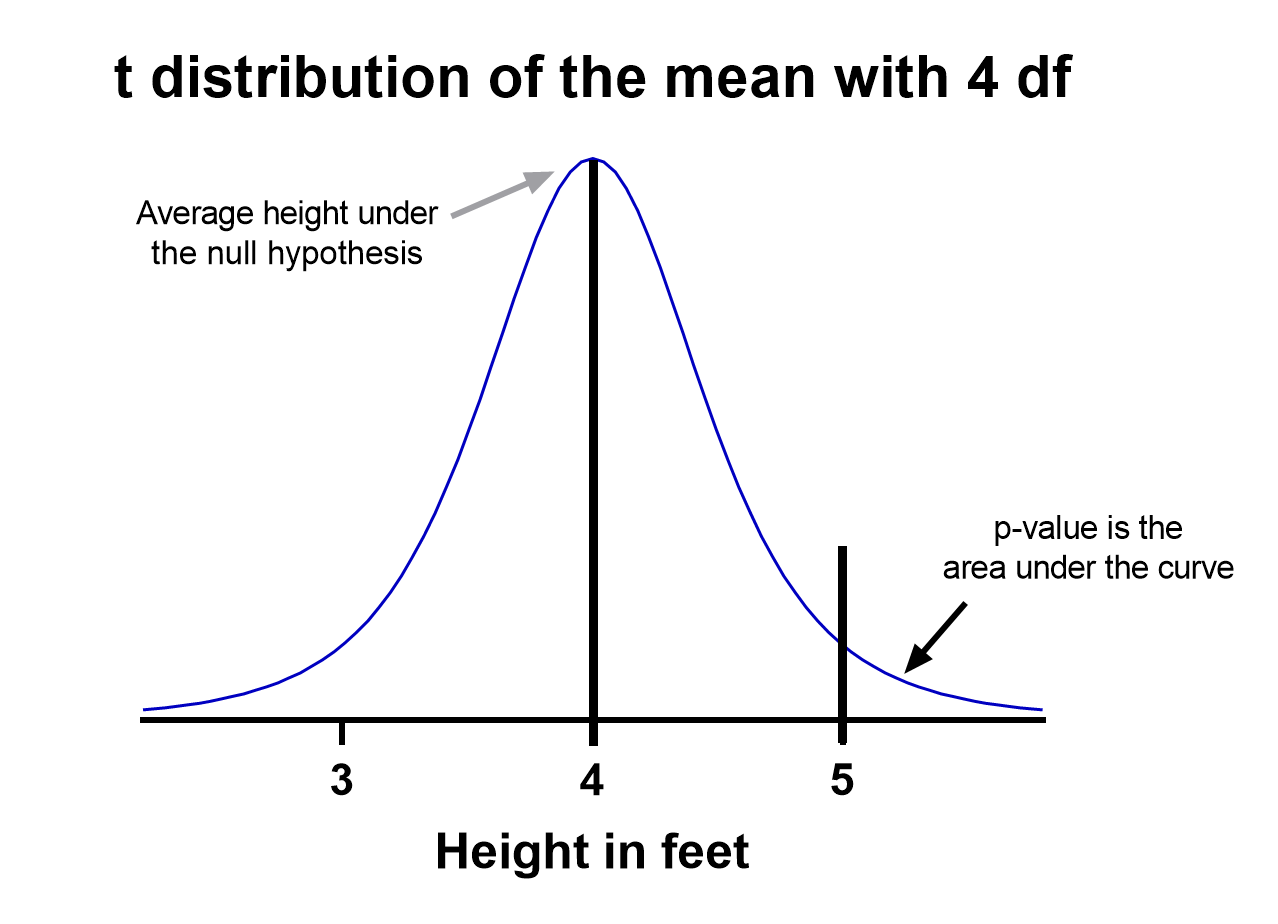

T tests make enough assumptions about your experiment to calculate an expected variability, and then use that to determine whether the observed data is statistically significant. To do this, t tests rely on an assumed “null hypothesis.” With the above example, the null hypothesis is that the average height is less than or equal to four feet.

Say that we measure the height of 5 randomly selected sixth graders and the average height is five feet. Does that mean that the “true” average height of all sixth graders is greater than four feet or did we randomly happen to measure taller than average students?

To evaluate this, we need a distribution that shows every possible average value resulting from a sample of five individuals in a population where the true mean is four. That may seem impossible to do, which is why there are particular assumptions that need to be made to perform a t test.

With those assumptions, then all that’s needed to determine the “sampling distribution of the mean” is the sample size (5 students in this case) and standard deviation of the data (let’s say it’s 1 foot).

That’s enough to create a graphic of the distribution of the mean, which is:

Notice the vertical line at x = 5, which was our sample mean. We (use software to) calculate the area to the right of the vertical line, which gives us the P value (0.09 in this case). Note that because our research question was asking if the average student is greater than four feet, the distribution is centered at four. Since we’re only interested in knowing if the average is greater than four feet, we use a one-tailed test in this case.

Using the standard significance level of 0.05 with this example, we don’t have evidence that the true average height of sixth graders is greater than four feet.
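For readers who want to see the tail-area calculation itself, here is a minimal sketch using only the inputs stated in this example (5 students, sample mean of 5 feet, null mean of 4 feet, standard deviation of 1 foot). It integrates the t-distribution density numerically with Simpson's rule rather than calling a statistics package; the function names are hypothetical. It prints both the one-sided and the two-sided tail area, because the two conventions differ by a factor of two and it is worth confirming which one a given tool reports: with these inputs the two-sided area is about 0.09 and the one-sided area is about half that.

```python
import math

def t_pdf(x, df):
    """Density of the t-distribution with df degrees of freedom."""
    log_coef = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    coef = math.exp(log_coef) / math.sqrt(df * math.pi)
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)

def upper_tail(t, df, steps=2000):
    """P(T > t) for t >= 0, using Simpson's rule on [0, t] (steps must be even)."""
    h = t / steps
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 0.5 - total * h / 3

# Inputs taken from the worked example in the text.
n, sample_mean, null_mean, sd = 5, 5.0, 4.0, 1.0
t_value = (sample_mean - null_mean) / (sd / math.sqrt(n))
one_sided = upper_tail(t_value, n - 1)
two_sided = 2 * one_sided
print(f"t = {t_value:.3f}, one-sided area = {one_sided:.4f}, two-sided area = {two_sided:.4f}")
```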

What are the assumptions for t tests?

- One variable of interest: This is not correlation or regression, where you are interested in the relationship between multiple variables. With a t test, you can have different samples, but they are all measuring the same variable (e.g., height).

- Numeric data: You are dealing with a list of measurements that can be averaged. This means you aren’t just counting occurrences in various categories (e.g., eye color or political affiliation).

- Two groups or fewer: If you have more than two samples of data, a t test is the wrong technique. You most likely need to try ANOVA.

- Random sample: You need a random sample from your statistical “population of interest” in order to draw valid conclusions about the larger population. If your population is so small that you can measure everything, then you have a “census” and don’t need statistics. This is because you don’t need to estimate the truth, since you have measured the truth without variability.

- Normally distributed: The smaller your sample size, the more important it is that your data come from a normal (Gaussian, bell-curve) distribution. If you have reason to believe that your data are not normally distributed, consider nonparametric t test alternatives. Normality matters less for larger samples (usually 25 or 30 observations or more, unless the data is heavily skewed). The reason is that the Central Limit Theorem applies in this case: even if the distribution of your data is not normal, the distribution of the mean of your data is approximately normal, so you can use a z-test rather than a t test.

How do I know which t test to use?

There are many types of t tests to choose from, but you don’t necessarily have to understand every detail behind each option.

You just need to be able to answer a few questions, which will lead you to pick the right t test. To that end, we put together this workflow for you to figure out which test is appropriate for your data.

Do you have one or two samples?

Are you comparing the means of two different samples, or comparing the mean from one sample to a fixed value? An example research question is, “Is the average height of my sample of sixth grade students greater than four feet?”

If you only have one sample of data, skip ahead to the one-sample t test section; otherwise, your next step is to ask:

Are observations in the two samples matched up or related in some way?

This could be before-and-after measurements of the same exact subjects, or perhaps your study split up “pairs” of subjects (who are technically different but share certain characteristics of interest) into the two samples. The same variable is measured in both cases.

If so, you are looking at some kind of paired samples t test. The paired samples section below will help you dial in exactly which one in that family is best for you, either difference (most common) or ratio.

If you aren’t sure paired is right, ask yourself another question:

Are you comparing different observations in each of the two samples?

If the answer is yes, then you have an unpaired or independent samples t test. The two samples should measure the same variable (e.g., height), but are samples from two distinct groups (e.g., team A and team B).

The goal is to compare the means to see if the groups are significantly different. For example, “Is the average height of team A greater than team B?” Unlike paired, the only relationship between the groups in this case is that we measured the same variable for both. There are two versions of unpaired samples t tests (pooled and unpooled) depending on whether you assume the same variance for each sample.

Have you run the same experiment multiple times on the same subject/observational unit?

If so, then you have a nested t test (unless you have more than two sample groups). This is a trickier concept to understand. One example is if you are measuring how well Fertilizer A works against Fertilizer B. Let’s say you have 12 pots to grow plants in (6 pots for each fertilizer), and you grow 3 plants in each pot.

In this case you have 6 observational units for each fertilizer, with 3 subsamples from each pot. You would want to analyze this with a nested t test . The “nested” factor in this case is the pots. It’s important to note that we aren’t interested in estimating the variability within each pot, we just want to take it into account.

You might be tempted to run an unpaired samples t test here, but that assumes you have 6*3 = 18 replicates for each fertilizer. However, the three replicates within each pot are related, and an unpaired samples t test wouldn’t take that into account.
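The difference between 6 and 18 replicates can be illustrated with a simplified sketch. It does not reproduce what a dedicated nested t test routine does; instead it swaps in a plainer approach, assumed here only for illustration, of collapsing each pot to its mean and then running a pooled two-sample t test on the 6 pot means per fertilizer. All plant heights and function names are hypothetical.

```python
import math
import statistics

# Hypothetical plant heights (cm): 6 pots per fertilizer, 3 plants per pot.
fertilizer_a = [[20.1, 21.0, 19.8], [22.3, 22.9, 21.7], [19.5, 20.2, 19.9],
                [21.1, 20.8, 21.5], [23.0, 22.4, 22.8], [20.6, 21.2, 20.9]]
fertilizer_b = [[18.9, 19.4, 18.7], [20.1, 19.8, 20.5], [18.2, 18.8, 18.5],
                [19.9, 19.3, 19.6], [20.8, 21.1, 20.4], [19.0, 19.5, 19.2]]

def pot_means(pots):
    """Collapse each pot (the observational unit) to a single mean."""
    return [statistics.mean(pot) for pot in pots]

def pooled_t(a, b):
    """Pooled two-sample t-value and its degrees of freedom."""
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * statistics.variance(a) + (n2 - 1) * statistics.variance(b)) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (statistics.mean(a) - statistics.mean(b)) / se, df

means_a, means_b = pot_means(fertilizer_a), pot_means(fertilizer_b)
t_value, df = pooled_t(means_a, means_b)
print(f"units per fertilizer = {len(means_a)}, t = {t_value:.3f}, df = {df}")
```

The degrees of freedom come out as 10 (from 12 pots), not 34 (from 36 plants), which is the point of treating the pot as the observational unit.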

What if none of these sound like my experiment?

If you’re not seeing your research question above, note that t tests are very basic statistical tools. Many experiments require more sophisticated techniques to evaluate differences. If the variable of interest is a proportion (e.g., 10 of 100 manufactured products were defective), then you’d use z-tests. If you take before and after measurements and have more than one treatment (e.g., control vs a treatment diet), then you need ANOVA.

How do I perform a t test using software?

If you’re wondering how to do a t test, the easiest way is with statistical software such as Prism or an online t test calculator.

If you’re using software, then all you need to know is which t test is appropriate (use the workflow above) and how to interpret the output. To do that, you’ll also need to:

- Determine whether your test is one or two-tailed

- Choose the level of significance

Is my test one or two-tailed?

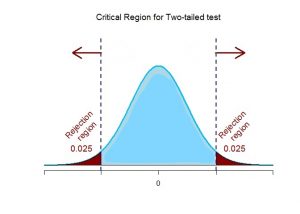

Whether or not you have a one- or two-tailed test depends on your research hypothesis. Choosing the appropriately tailed test is very important and requires integrity from the researcher. This is because you have more “power” with one-tailed tests, meaning that you can detect a statistically significant difference more easily. Unless you have written out your research hypothesis as one directional before you run your experiment, you should use a two-tailed test.

Two-tailed tests

Two-tailed tests are the most common, and they are applicable when your research question is simply asking, “is there a difference?”

One-tailed tests

Contrast that with one-tailed tests, where the research questions are directional, meaning that the question is either “is it greater than?” or “is it less than?”. These tests can only detect a difference in one direction.

Choosing the level of significance

All t tests estimate whether a mean of a population is different than some other value, and with all estimates come some variability, or what statisticians call “error.” Before analyzing your data, you want to choose a level of significance, usually denoted by the Greek letter alpha, 𝛼. The scientific standard is setting alpha to be 0.05.

An alpha of 0.05 results in 95% confidence intervals, and determines the cutoff for when P values are considered statistically significant.

One sample t test

If you only have one sample of a list of numbers, you are doing a one-sample t test. All you are interested in doing is comparing the mean from this group with some known value to test whether there is evidence that it is significantly different from that standard. Use our free one-sample t test calculator for this.

A one sample t test example research question is, “Is the average fifth grader taller than four feet?”

It is the simplest version of a t test, and has all sorts of applications within hypothesis testing. Sometimes the “known value” is called the “null value”. While the null value in t tests is often 0, it could be any value. The name comes from being the value which exactly represents the null hypothesis, where no significant difference exists.

Any time you know the exact number you are trying to compare your sample of data against, this could work well. And of course: it can be either one or two-tailed.

One sample t test formula

Statistical software handles this for you, but if you want the details, the formula for a one sample t test is:

$$t = \frac{M - \mu}{s / \sqrt{n}}$$

- M: Calculated mean of your sample

- μ: Hypothetical mean you are testing against

- s: The standard deviation of your sample

- n: The number of observations in your sample.

In a one-sample t test, calculating degrees of freedom is simple: one less than the number of objects in your dataset (you’ll see it written as n-1).
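As a minimal sketch of the quantities listed above (M, μ, s, n, and n-1 degrees of freedom), the following Python computes a one-sample t statistic by hand. The function name `one_sample_t` and the height values are hypothetical, invented for illustration.

```python
import math
import statistics

def one_sample_t(sample, mu):
    """Return (t, degrees of freedom) for a one-sample t test against mu."""
    m = statistics.mean(sample)    # M: mean of the sample
    s = statistics.stdev(sample)   # s: sample standard deviation
    n = len(sample)                # n: number of observations
    return (m - mu) / (s / math.sqrt(n)), n - 1

# Hypothetical heights in feet, invented for illustration only.
heights_ft = [4.2, 4.5, 3.9, 4.4, 4.1, 4.6, 4.3, 4.0]
t_value, df = one_sample_t(heights_ft, mu=4.0)
print(f"t = {t_value:.3f}, df = {df}")
```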

Example of a one sample t test

For our example within Prism, we have a dataset of 12 values from an experiment labeled “% of control”. Perhaps these are heights of a sample of plants that have been treated with a new fertilizer. A value of 100 represents the industry-standard control height. Likewise, 123 represents a plant with a height 123% that of the control (that is, 23% larger).

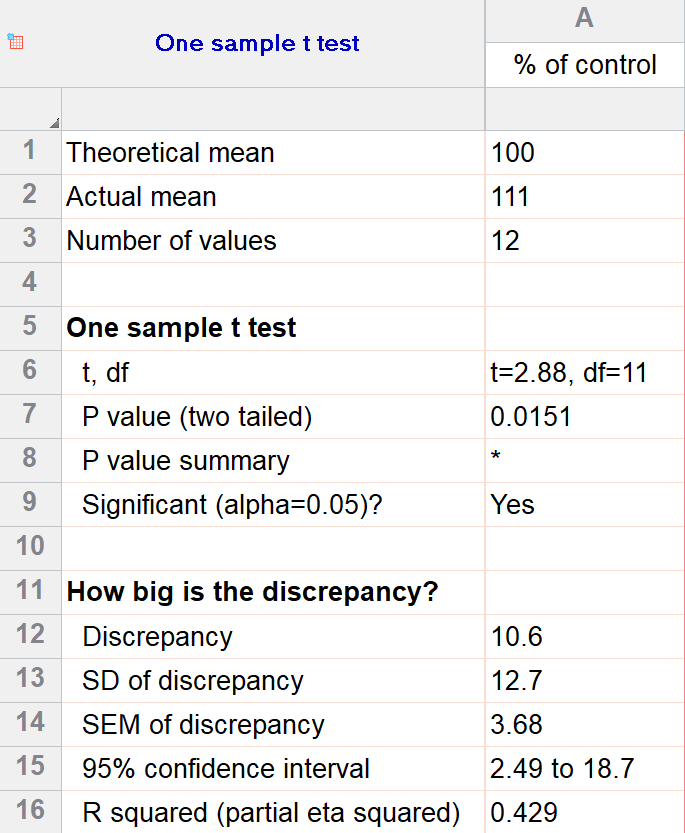

We’ll perform a two-tailed, one-sample t test to see if plants are shorter or taller on average with the fertilizer. We will use a significance threshold of 0.05. Here is the output:

You can see in the output that the actual sample mean was 111. Is that different enough from the industry standard (100) to conclude that there is a statistical difference?

The quick answer is yes, there’s strong evidence that the height of the plants with the fertilizer is greater than the industry standard (p=0.015). The nice thing about using software is that it handles some of the trickier steps for you. In this case, it calculates your test statistic (t=2.88), determines the appropriate degrees of freedom (11), and outputs a P value.

More informative than the P value is the confidence interval of the difference, which is 2.49 to 18.7. The confidence interval tells us that, based on our data, we are 95% confident that the true difference between our sample and the baseline value of 100 is somewhere between 2.49 and 18.7. As long as the difference is statistically significant, the interval will not contain zero.
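The confidence interval and the test statistic are two views of the same calculation, and a short sketch can show how they connect. It uses only the figures reported above (interval 2.49 to 18.7, 11 degrees of freedom) plus one assumption: 2.201 as the two-tailed 0.05 critical value for 11 degrees of freedom, taken from a standard t table. Variable names are hypothetical.

```python
# Reported 95% confidence interval of the difference from the example output.
ci_low, ci_high = 2.49, 18.7

# Assumed: two-tailed 0.05 critical t-value for 11 degrees of freedom (standard t table).
t_crit = 2.201

mean_diff = (ci_low + ci_high) / 2            # the interval is centered on the mean difference
std_error = (ci_high - ci_low) / 2 / t_crit   # half-width = t_crit * standard error
t_value = mean_diff / std_error               # test statistic against a difference of 0

print(f"mean difference = {mean_diff:.2f}, standard error = {std_error:.2f}, t = {t_value:.2f}")
```

The recovered t-value agrees with the reported t=2.88 up to rounding of the interval endpoints.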

You can follow these tips for interpreting your own one-sample test.

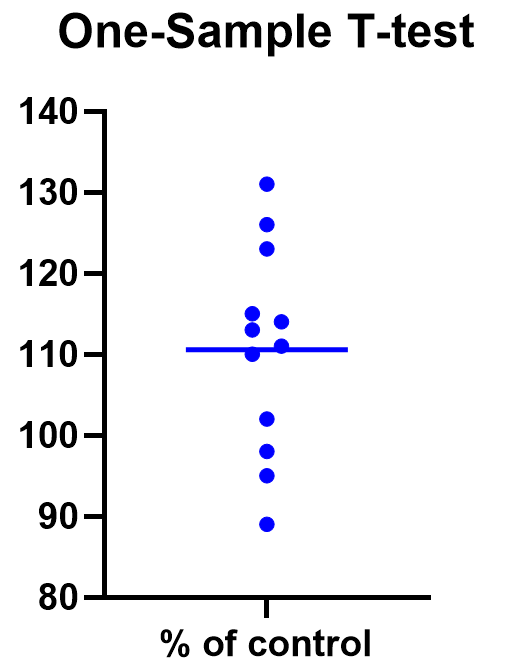

Graphing a one-sample t test

For some techniques (like regression), graphing the data is a very helpful part of the analysis. For t tests, making a chart of your data is still useful to spot any strange patterns or outliers, but the small sample size means you may already be familiar with any strange things in your data.

Here we have a simple plot of the data points, perhaps with a mark for the average. We’ve made this as an example, but the truth is that graphing is usually more visually telling for two-sample t tests than for just one sample.

Two sample t tests

There are several kinds of two sample t tests, with the two main categories being paired and unpaired (independent) samples.

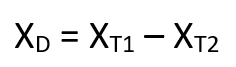

Paired samples t test

In a paired samples t test, also called dependent samples t test, there are two samples of data, and each observation in one sample is “paired” with an observation in the second sample. The most common example is when measurements are taken on each subject before and after a treatment. A paired t test example research question is, “Is there a statistical difference between the average red blood cell counts before and after a treatment?”

Having two samples that are closely related simplifies the analysis. Statistical software, such as this paired t test calculator, will simply take a difference between the two values, and then compare that difference to 0.

In some (rare) situations, taking a difference between the pairs violates the assumptions of a t test, because the average difference changes based on the size of the before value (e.g., there’s a larger difference between before and after when there was more to start with). In this case, instead of using a difference test, use a ratio of the before and after values, which is referred to as a ratio t test.

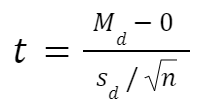

Paired t test formula

The formula for paired samples t test is:

$$t = \frac{M_d - 0}{s_d / \sqrt{n}}$$

- Md: Mean difference between the samples

- sd: The standard deviation of the differences

- n: The number of differences

Degrees of freedom are the same as before. If you’re studying for an exam, you can remember that the degrees of freedom are still n-1 (not n-2) because we are converting the data into a single column of differences rather than considering the two groups independently.

Also note that the null value here is simply 0. There is no real reason to include “minus 0” in an equation other than to illustrate that we are still doing a hypothesis test. After you take the difference between the two means, you are comparing that difference to 0.
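The paired calculation can be sketched as a one-sample test on the column of differences. This is a minimal illustration with hypothetical before and after values (not the example data from the figures), and the function name `paired_t` is an assumption.

```python
import math
import statistics

def paired_t(before, after):
    """Return (t, degrees of freedom) for a paired t test on after - before."""
    diffs = [a - b for b, a in zip(before, after)]  # single column of differences
    md = statistics.mean(diffs)                     # Md: mean difference
    sd = statistics.stdev(diffs)                    # sd: standard deviation of the differences
    n = len(diffs)                                  # n: number of differences
    return (md - 0) / (sd / math.sqrt(n)), n - 1    # null value is 0

# Hypothetical measurements on five subjects, invented for illustration only.
before = [10.0, 12.0, 9.0, 11.0, 13.0]
after = [11.5, 13.0, 10.5, 12.0, 15.0]

t_value, df = paired_t(before, after)
print(f"t = {t_value:.3f}, df = {df}")
```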

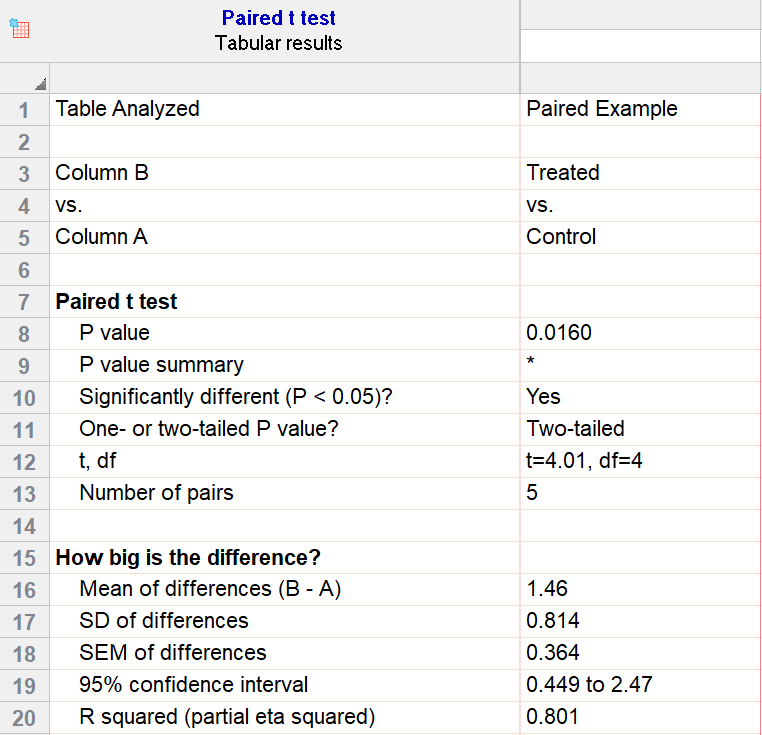

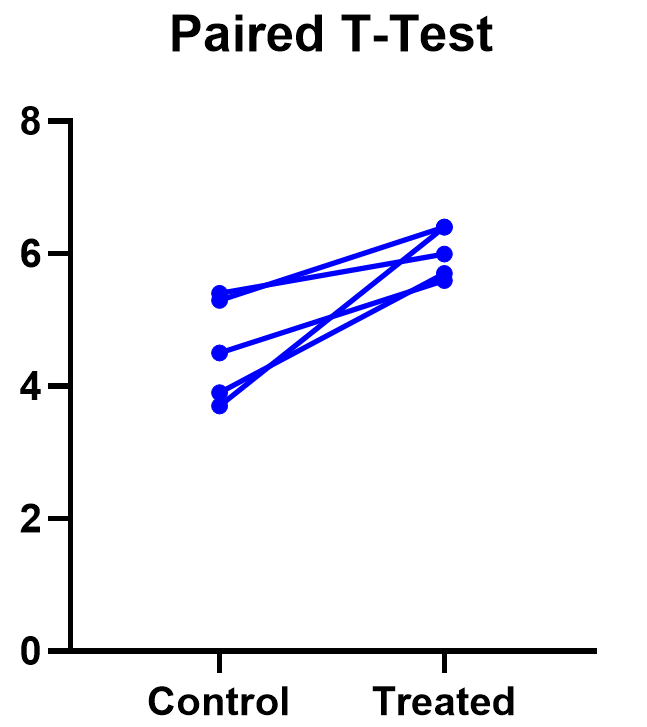

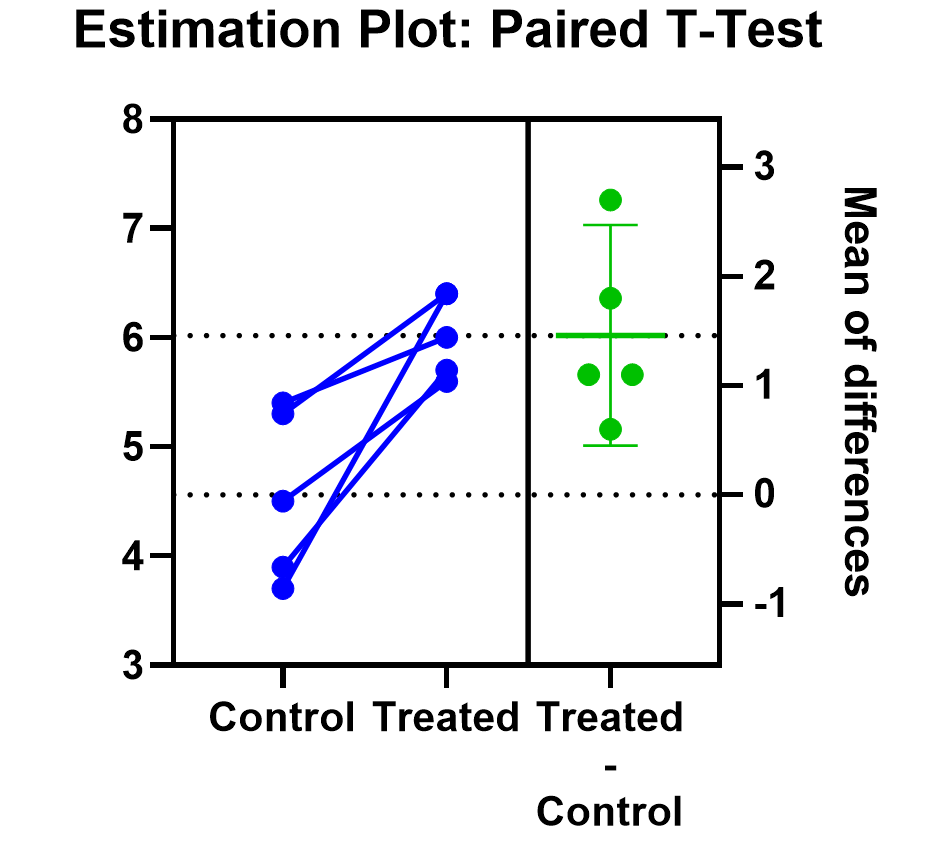

For our example data, we have five test subjects and have taken two measurements from each: before (“control”) and after a treatment (“treated”). If we set alpha = 0.05 and perform a two-tailed test, we observe a statistically significant difference between the treated and control group (p=0.0160, t=4.01, df = 4). We are 95% confident that the true mean difference between the treated and control group is between 0.449 and 2.47.

Graphing a paired t test

The significant result of the P value suggests evidence that the treatment had some effect, and we can also look at this graphically. The lines that connect the observations can help us spot a pattern, if it exists. In this case the lines show that all observations increased after treatment. While not all graphics are this straightforward, here it is very consistent with the outcome of the t test.

Prism’s estimation plot is even more helpful because it shows both the data (like above) and the confidence interval for the difference between means. You can easily see the evidence of significance since the confidence interval on the right does not contain zero.

Here are some more graphing tips for paired t tests .

Unpaired samples t test

Unpaired samples t test, also called independent samples t test, is appropriate when you have two sample groups that aren’t correlated with one another. A pharma example is testing a treatment group against a control group of different subjects. Compare that with a paired sample, which might be recording the same subjects before and after a treatment.

With unpaired t tests, in addition to choosing your level of significance and a one or two tailed test, you need to decide whether to assume that the variances of the two groups are the same. If you assume equal variances, then you can “pool” the calculation of the standard error between the two samples. Otherwise, the standard choice is Welch’s t test, which corrects for unequal variances. This choice affects the calculation of the test statistic and the power of the test, which is the test’s sensitivity to detect statistical significance.

It’s best to choose whether you’ll use a pooled or unpooled (Welch’s) standard error before running your experiment, because the standard statistical test for equal variances is notoriously problematic.

As long as you’re using statistical software, such as this two-sample t test calculator, it’s just as easy to calculate a test statistic whether or not you assume that the variances of your two samples are the same. If you’re doing it by hand, however, the calculations get more complicated with unequal variances.

Unpaired (independent) samples t test formula

The general two-sample t test formula is:

$$t = \frac{M_1 - M_2}{SE}$$

- M1 and M2: Two means you are comparing, one from each dataset

- SE : The combined standard error of the two samples (calculated using pooled or unpooled standard error)

The denominator (standard error) calculation can be complicated, as can the degrees of freedom. If the groups are not balanced (the same number of observations in each), you will need to account for both when determining n for the test as a whole.
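To show where the pooled and unpooled versions differ, here is a hedged sketch of both standard error calculations and their degrees of freedom. The unpooled version uses the Welch-Satterthwaite approximation for degrees of freedom, which is the usual choice for Welch's t test; the function names and the unbalanced data (6 and 5 observations) are hypothetical.

```python
import math
import statistics

def pooled_se_df(a, b):
    """Pooled standard error (assumes equal variances) and df = n1 + n2 - 2."""
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * statistics.variance(a) + (n2 - 1) * statistics.variance(b)) / df
    return math.sqrt(pooled_var * (1 / n1 + 1 / n2)), df

def welch_se_df(a, b):
    """Unpooled standard error with Welch-Satterthwaite approximate df."""
    v1 = statistics.variance(a) / len(a)
    v2 = statistics.variance(b) / len(b)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (len(a) - 1) + v2 ** 2 / (len(b) - 1))
    return math.sqrt(v1 + v2), df

# Hypothetical unbalanced samples, invented for illustration only.
group_a = [85.0, 92.0, 78.0, 88.0, 95.0, 90.0]
group_b = [80.0, 75.0, 88.0, 70.0, 82.0]
mean_diff = statistics.mean(group_a) - statistics.mean(group_b)

pooled_se, pooled_df = pooled_se_df(group_a, group_b)
welch_se, welch_df = welch_se_df(group_a, group_b)
print(f"pooled: t = {mean_diff / pooled_se:.3f}, df = {pooled_df}")
print(f"Welch:  t = {mean_diff / welch_se:.3f}, df = {welch_df:.2f}")
```

The numerator is the same in both cases; only the standard error and the degrees of freedom change.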

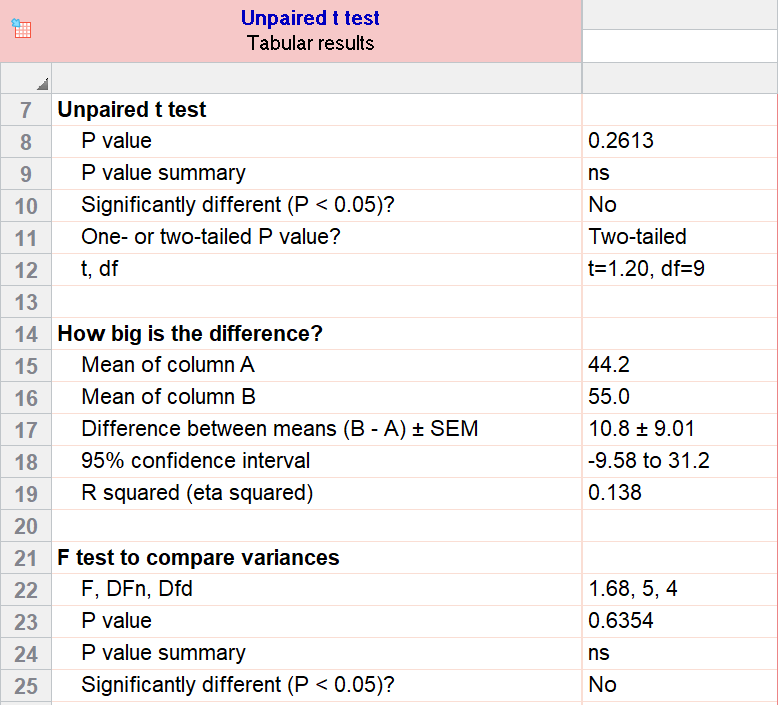

As an example for this family, we conduct an unpaired samples t test assuming equal variances (pooled). Based on our research hypothesis, we’ll conduct a two-tailed test, and use alpha=0.05 for our level of significance. Our samples were unbalanced, with 6 and 5 observations respectively.

The P value (p=0.261, t = 1.20, df = 9) is higher than our threshold of 0.05. We have not found sufficient evidence to suggest a significant difference. You can see the confidence interval of the difference of the means is -9.58 to 31.2.

Note that the F-test result shows that the variances of the two groups are not significantly different from each other.

Graphing an unpaired samples t test

For an unpaired samples t test, graphing the data can quickly help you get a handle on the two groups and how similar or different they are. Like the paired example, this helps confirm the evidence (or lack thereof) that is found by doing the t test itself.

Below you can see that the observed mean for females is higher than that for males. But because of the variability in the data, we can’t tell if the means are actually different or if the difference is just by chance.

Nonparametric alternatives for t tests

If your data comes from a normal distribution (or something close enough to a normal distribution), then a t test is valid. If that assumption is violated, you can use nonparametric alternatives.

T tests evaluate whether the mean is different from another value, whereas nonparametric alternatives compare either the median or the rank. Medians are well-known to be much more robust to outliers than the mean.

The downside to nonparametric tests is that they don’t have as much statistical power, meaning a larger difference is required in order to determine that it’s statistically significant.

Wilcoxon signed-rank test

The Wilcoxon signed-rank test is the nonparametric cousin to the one-sample t test. This compares a sample median to a hypothetical median value. It is sometimes even erroneously called the Wilcoxon t test (even though it calculates a “W” statistic).

And if you have two related samples, you should use the Wilcoxon matched pairs test instead. The two versions of Wilcoxon are different, and the matched pairs version is specifically for comparing the median difference for paired samples.

Mann-Whitney and Kolmogorov-Smirnov tests

For unpaired (independent) samples, there are multiple options for nonparametric testing. Mann-Whitney is more popular and compares the mean ranks (the ordering of values from smallest to largest) of the two samples. Mann-Whitney is often misrepresented as a comparison of medians, but that’s not always the case. Kolmogorov-Smirnov tests if the overall distributions differ between the two samples.
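To give a feel for what a rank-based comparison computes, here is a minimal sketch of the Mann-Whitney U statistic in its pairwise-count form, which is equivalent to the rank-sum form. It only computes the statistic, not a P value; the function name and the data are hypothetical.

```python
def mann_whitney_u(x, y):
    """U for sample x: count of (x_i, y_j) pairs with x_i > y_j, ties counted as half."""
    u = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u += 1.0
            elif xi == yj:
                u += 0.5
    return u

# Hypothetical independent samples, invented for illustration only.
sample_x = [1.1, 2.3, 3.8, 4.0]
sample_y = [2.0, 5.1, 6.3]

u_x = mann_whitney_u(sample_x, sample_y)
u_y = mann_whitney_u(sample_y, sample_x)
print(f"U for x = {u_x}, U for y = {u_y}, total pairs = {len(sample_x) * len(sample_y)}")
```

Because the statistic depends only on the ordering of values, a single extreme outlier shifts it far less than it would shift a mean.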

More t test FAQs

What is the formula for a t test?

The exact formula depends on which type of t test you are running, although there is a basic structure that all t tests have in common. All t test statistics will have the form:

$$t = \frac{\text{Mean}_1 - \text{Mean}_2}{\text{Standard Error of the Mean}}$$

- t : The t test statistic you calculate for your test

- Mean1 and Mean2: Two means you are comparing, at least 1 from your own dataset

- Standard Error of the Mean: The standard error of the mean, also called the standard deviation of the mean, which takes into account the variance and size of your dataset

The exact formula for any t test can be slightly different, particularly the calculation of the standard error. Not only does it matter whether one or two samples are being compared, the relationship between the samples can make a difference too.

What is a t-distribution?

A t-distribution is similar to a normal distribution. It’s a bell-shaped curve, but compared to a normal it has fatter tails, which means that it’s more common to observe extremes. T-distributions are identified by the number of degrees of freedom. The higher the number, the closer the t-distribution gets to a normal distribution. After about 30 degrees of freedom, a t and a standard normal are practically the same.
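The "fatter tails" can be seen by comparing densities far from the center. The sketch below evaluates the t-distribution density and the standard normal density at x = 3 for a few degrees of freedom; the function names and the choice of x = 3 are assumptions made for illustration.

```python
import math

def t_pdf(x, df):
    """Density of the t-distribution with df degrees of freedom."""
    log_coef = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    coef = math.exp(log_coef) / math.sqrt(df * math.pi)
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)

def normal_pdf(x):
    """Density of the standard normal distribution."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)

x = 3.0
densities = {df: t_pdf(x, df) for df in (2, 5, 30, 1000)}
for df, value in densities.items():
    print(f"t density at x = {x} with {df} df: {value:.5f}")
print(f"standard normal density at x = {x}: {normal_pdf(x):.5f}")
```

The tail density shrinks toward the normal value as the degrees of freedom grow.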

What are degrees of freedom?

Degrees of freedom are a measure of how large your dataset is. They aren’t exactly the number of observations, because they also take into account the number of parameters (e.g., mean, variance) that you have estimated.

What is the difference between paired vs unpaired t tests?

Both paired and unpaired t tests involve two sample groups of data. With a paired t test, the values in each group are related (usually they are before and after values measured on the same test subject). In contrast, with unpaired t tests, the observed values aren’t related between groups. An unpaired, or independent t test, example is comparing the average height of children at school A vs school B.

When do I use a z-test versus a t test?

Z-tests, which compare data using a normal distribution rather than a t-distribution, are primarily used for two situations. The first is when you’re evaluating proportions (number of failures on an assembly line). The second is when your sample size is large enough (usually around 30) that you can use a normal approximation to evaluate the means.

When should I use ANOVA instead of a t test?

Use ANOVA if you have more than two group means to compare.

What are the differences between t test vs chi square?

Chi square tests are used to evaluate contingency tables, which record a count of the number of subjects that fall into particular categories (e.g., truck, SUV, car). t tests compare the mean(s) of a variable of interest (e.g., height, weight).

What are P values?

P values are the probability that you would get data as or more extreme than the observed data given that the null hypothesis is true. It’s a mouthful, and there are a lot of issues to be aware of with P values.

What are t test critical values?

Critical values are a classical form (they aren’t used directly with modern computing) of determining if a statistical test is significant or not. Historically you could calculate your test statistic from your data, and then use a t-table to look up the cutoff value (critical value) that represented a “significant” result. You would then compare your observed statistic against the critical value.

How do I calculate degrees of freedom for my t test?

In most practical usage, degrees of freedom are the number of observations you have minus the number of parameters you are trying to estimate. The calculation isn’t always straightforward and is approximated for some t tests.

Statistical software calculates degrees of freedom automatically as part of the analysis, so understanding them in more detail isn’t needed beyond assuaging any curiosity.


JMP | Statistical Discovery.™ From SAS.

Statistics Knowledge Portal

A free online introduction to statistics

What is a t-test?

A t-test (also known as Student's t-test) is a tool for evaluating the means of one or two populations using hypothesis testing. A t-test may be used to evaluate whether a single group differs from a known value (a one-sample t-test), whether two groups differ from each other (an independent two-sample t-test), or whether there is a significant difference in paired measurements (a paired, or dependent samples t-test).

How are t-tests used?

First, you define the hypothesis you are going to test and specify an acceptable risk of drawing a faulty conclusion. For example, when comparing two populations, you might hypothesize that their means are the same, and you decide on an acceptable probability of concluding that a difference exists when that is not true. Next, you calculate a test statistic from your data and compare it to a theoretical value from a t-distribution. Depending on the outcome, you either reject or fail to reject your null hypothesis.

What if I have more than two groups?

You cannot use a t-test. Use a multiple comparison method. Examples are analysis of variance (ANOVA), Tukey-Kramer pairwise comparison, Dunnett's comparison to a control, and analysis of means (ANOM).

t-Test assumptions

While t-tests are relatively robust to deviations from assumptions, t-tests do assume that:

- The data are continuous.

- The sample data have been randomly sampled from a population.

- There is homogeneity of variance (i.e., the variability of the data in each group is similar).

- The distribution is approximately normal.

For two-sample t-tests, we must have independent samples. If the samples are not independent, then a paired t-test may be appropriate.

Types of t-tests

There are three t-tests to compare means: a one-sample t-test, a two-sample t-test and a paired t-test. The table below summarizes the characteristics of each and provides guidance on how to choose the correct test. Visit the individual pages for each type of t-test for examples along with details on assumptions and calculations.

| | One-sample t-test | Two-sample t-test | Paired t-test |
|---|---|---|---|
| Synonyms | Student’s t-test | Independent samples t-test | Dependent samples t-test |
| Number of variables | One | Two | Two |
| Type of variable | | | |
| Purpose of test | Decide if the population mean is equal to a specific value or not | Decide if the population means for two different groups are equal or not | Decide if the difference between paired measurements for a population is zero or not |
| Example: test if... | Mean heart rate of a group of people is equal to 65 or not | Mean heart rates for two groups of people are the same or not | Mean difference in heart rate for a group of people before and after exercise is zero or not |
| Estimate of population mean | Sample average | Sample average for each group | Sample average of the differences in paired measurements |
| Population standard deviation | Unknown, use sample standard deviation | Unknown, use sample standard deviations for each group | Unknown, use sample standard deviation of differences in paired measurements |
| Degrees of freedom | Number of observations in sample minus 1, or: n–1 | Sum of observations in each sample minus 2, or: n₁ + n₂ – 2 | Number of paired observations in sample minus 1, or: n–1 |

The table above shows only the t-tests for population means. Another common t-test is for correlation coefficients. You use this t-test to decide if the correlation coefficient is significantly different from zero.

One-tailed vs. two-tailed tests

When you define the hypothesis, you also define whether you have a one-tailed or a two-tailed test. You should make this decision before collecting your data or doing any calculations. You make this decision for all three of the t-tests for means.

To explain, let’s use the one-sample t-test. Suppose we have a random sample of protein bars, and the label for the bars advertises 20 grams of protein per bar. The null hypothesis is that the unknown population mean is 20. Suppose we simply want to know if the data shows we have a different population mean. In this situation, our hypotheses are:

$ \mathrm H_o: \mu = 20 $

$ \mathrm H_a: \mu \neq 20 $

Here, we have a two-tailed test. We will use the data to see if the sample average differs sufficiently from 20 – either higher or lower – to conclude that the unknown population mean is different from 20.

Suppose instead that we want to know whether the advertising on the label is correct. Does the data support the idea that the unknown population mean is at least 20? Or not? In this situation, our hypotheses are:

$ \mathrm H_o: \mu \geq 20 $

$ \mathrm H_a: \mu < 20 $

Here, we have a one-tailed test. We will use the data to see if the sample average is sufficiently less than 20 to reject the hypothesis that the unknown population mean is 20 or higher.
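The difference between the two setups can be sketched in a few lines of Python. The protein measurements below are invented for illustration (they are not from any real sample), and the two critical values are the standard t-table entries for α = 0.05 with 9 degrees of freedom.

```python
import math
import statistics

# Hypothetical protein measurements (grams per bar); not real data.
protein = [19.0, 20.5, 18.2, 19.8, 20.1, 18.9, 19.5, 20.7, 18.4, 18.9]
LABEL_MEAN = 20.0

n = len(protein)
standard_error = statistics.stdev(protein) / math.sqrt(n)
t_stat = (statistics.mean(protein) - LABEL_MEAN) / standard_error

# Critical values for alpha = 0.05 and df = n - 1 = 9, read from a t-table.
T_CRIT_TWO_TAILED = 2.262  # alpha is split across both tails
T_CRIT_ONE_TAILED = 1.833  # all of alpha sits in the lower tail

# Two-tailed (H_a: mu != 20): reject when |t| exceeds the cutoff.
reject_two_tailed = abs(t_stat) > T_CRIT_TWO_TAILED
# One-tailed (H_a: mu < 20): reject only when t is far enough below zero.
reject_one_tailed = t_stat < -T_CRIT_ONE_TAILED

print(f"t = {t_stat:.3f}")
print("two-tailed test rejects H0:", reject_two_tailed)
print("one-tailed test rejects H0:", reject_one_tailed)
```

With these made-up numbers the test statistic is about -2.21, which is extreme enough for the one-tailed test but not for the two-tailed one. That the same data can lead to different conclusions is the reason the choice has to be made before looking at the data.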

See the "tails for hypotheses tests" section on the t-distribution page for images that illustrate the concepts for one-tailed and two-tailed tests.

How to perform a t-test

For all of the t-tests involving means, you perform the same steps in analysis:

- Define your null ($ \mathrm H_o $) and alternative ($ \mathrm H_a $) hypotheses before collecting your data.

- Decide on the alpha value (or α value). This involves determining the risk you are willing to take of drawing the wrong conclusion. For example, suppose you set α=0.05 when comparing two independent groups. Here, you have decided on a 5% risk of concluding the unknown population means are different when they are not.

- Check the data for errors.

- Check the assumptions for the test.

- Perform the test and draw your conclusion. All t-tests for means involve calculating a test statistic. You compare the test statistic to a theoretical value from the t-distribution. The theoretical value involves both the α value and the degrees of freedom for your data. For more detail, visit the pages for one-sample t-test, two-sample t-test and paired t-test.


T-Test: What It Is, Its Advantages + Steps to Perform It

Using statistical analyses is crucial for making sense of research data, and the t-test is a key tool in this process. The test helps researchers find important differences between groups, whether they’re studying how different teaching methods affect student performance or evaluating the effectiveness of a new medical treatment.

This statistical test comes in two forms: independent and paired. It helps determine if differences in averages are likely because of real effects or just random chance. William Sealy Gosset, a British statistician, created it in 1908 while working at the Guinness Brewery. He needed a way to analyze small samples of data from beer production.

Nowadays, the t-test, also called Student’s t-test, is widely used in scientific and market research.

In this article, we will learn how the t-test works, its different applications, and how it is used in practice.

What is a T-test?

The t-test is a statistical test that helps you compare the means of two sets of data to see if they’re significantly different.

Imagine you have two groups of students: one group took math classes, and the other group didn’t. You can use the t-test to find out if the group that took math classes scored significantly higher on a math test than the group that didn’t.

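When you use the t-test, you will get a “t value,” which expresses the difference between the averages of the two groups relative to the variability in the data. The larger its absolute value, the stronger the evidence that the difference is not just due to chance.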

What are the main uses of T-test?

The test is used in many fields, such as medical research, psychology, economics, and education. Here are some of the main uses of the t-test:

- Comparing Two Groups: This test is used to compare data from two groups. For example, it helps assess if there’s a difference in test scores between two sets of students.

- Assessing Treatment Effectiveness: The t-test can be used to evaluate if a treatment has a significant impact on a variable compared to a control group that did not receive the treatment.

- Analyzing Experiments: In scientific experiments, the t-test is frequently used to compare results between a treatment group and a control group.

- Exploring Gender Differences: Gender studies often use the t-test to compare mean differences between men and women concerning a particular variable.

- Survey Data Analysis: You can also use it for survey data analysis to compare the means of two groups of data, such as comparing average income between different groups of men and women.

Types of T-test

The Student t-test is an important statistical tool used in various forms, each designed to address specific research details. It’s essential for you to understand these types to ensure accuracy in your analysis. The most common types are:

01. Two-sample T-test for Independent Data

This test helps you compare the averages of two separate groups that aren’t connected. It’s handy when the observations in one group have no relation to the observations in the other group.

For instance, you can use it to compare the average grades of students from two different courses.

02. Two-sample T-test for Related or Paired Data

It is also known as a related samples t-test or paired t-test. This type compares the average values of two connected groups by looking at the differences within each pair of observations.

For example, you can examine measurements taken before and after treatment within the same group of people.

03. One-sample T-test

This test helps you check if the average of one group is different from a known or expected value, like the overall average. It’s used to see if the group’s average is significantly different from what you expected.

04. Equal or Heterogeneous Variance T-test

Student t-tests usually expect the variances of the two groups being compared to be the same. But sometimes, this might not be the case.

The equal variances t-test is used when we assume the variances are equal, and the heterogeneous variances t-test is used when we assume they are different between the two groups.

05. One-tailed or Two-tailed t-test

A Student’s t-test can be either one-tailed or two-tailed, based on the research question.

If you want to know whether one average differs from another in one specified direction (only higher, or only lower), use a one-tailed test. On the other hand, a two-tailed test is used to find any significant difference between the averages, whether higher or lower.

What is the One-sample Student’s t-test?

The one-sample Student’s t-test is a method used to find out if the average of a sample is different from a known or assumed average of the entire population. It’s particularly handy when the population standard deviation is unknown and the sample size is small (less than 30).

This test involves calculating the t-statistic. You get this by dividing the difference between the sample mean and the assumed or known population mean by the standard error, which is the sample standard deviation divided by the square root of the sample size.

Here’s the key: If the absolute value of the calculated t statistic is larger than the critical value of t that you find in a table specific to the Student’s t distribution (based on the chosen significance level and degrees of freedom, which is one less than the sample size), it means there’s enough evidence to say the sample mean is significantly different from the supposed or known mean.

In simpler terms, the one-sample Student’s t-test is a helpful tool for checking if a sample accurately represents a bigger population and for figuring out if the difference between the sample mean and the population mean is statistically significant.

Advantages of performing the T-test

The Student t-test is a handy statistical tool with several advantages for different research situations. Some of the main advantages are:

- Works with Different Sample Sizes: Unlike other tests, the t-test is flexible and can be used with both small and large samples.

- Doesn’t Require a Perfectly Normal Distribution: The t-test is robust, meaning it can handle situations where the data doesn’t perfectly follow a normal distribution, especially when the sample size is large.

- Easy to Calculate: This test is relatively simple and straightforward to calculate. This simplicity makes it practical and applicable in various research scenarios.

- Versatile Application: The test finds use in diverse fields like medical research, education studies, market research, and engineering, showcasing its wide-ranging applicability.

- Detects Statistical Significance: One of its main purposes is to determine whether the observed difference between the sample mean and the known or assumed population mean is statistically significant or not.

Steps to perform a Student t test

Performing a Student t-test is a careful and detailed process that requires close attention at every step. Let’s take a thorough look at the various aspects involved:

Step 1: Define the Null and Alternative Hypothesis

Start by creating a straightforward null hypothesis that says there’s no difference between the averages. Then, make an alternative hypothesis that states there is a difference.

This first step is crucial because it sets up the hypotheses that will steer the whole analysis. It gives a clear direction for the investigation.

Step 2: Select the Appropriate Type of T-test

Decide whether to use an independent samples t-test or a paired samples t-test based on how the data sets are related.

The type of data you have will guide your decision. If you’re comparing data from separate groups, go for the independent samples t-test. If you’re working with related observations, choose the paired samples t-test.

Step 3: Calculate Mean, Standard Deviation, and Sample Size

Collect important information about each group, such as the average (mean), how spread out the values are (standard deviation), and the number of observations in each group (sample size).

These numbers will help you understand the typical value, the range of values, and how many data points are in each group. They are important for doing further calculations.

Step 4: Calculate the t-statistic

Use the right formula to calculate the t-statistic, taking into account the average differences, the spread of data, and the size of the samples.

This calculation helps measure how much the groups differ, combining information about the average and how spread out the data is for a detailed evaluation.

Step 5: Determine the Critical Value of t

Look at a Student t distribution table to find the critical t value for the selected significance level (usually 0.05) and the degrees of freedom of your test.

The critical t value helps decide whether to reject the null hypothesis in statistical analysis. It’s an important factor in making decisions based on statistics.

Step 6: Compare Calculated and Critical t Values

Check if the absolute value of the calculated t is higher than the critical value from the distribution table.

This comparison is really important. If the absolute value of the calculated t is greater than the critical threshold, it means you can reject the null hypothesis, showing there’s a significant difference between the means.

Step 7: Interpret Results and Conclude

Combine the results to make sense of them and understand the importance of the differences you observed.

In this last stage, turn the numbers and data into practical insights that have real-world meaning. This helps answer the research question and supports making well-informed decisions.

Conducting a t-test can be a bit tricky, especially when you need to think about whether your data is normal and if the variances are similar. If you find yourself dealing with these issues, it might be helpful to use statistical software or get help from a statistician.

Example of T-test

Here’s an example of using the Student t test in marketing research:

Let’s say a company wants to find out if there’s a big difference in customer satisfaction with two versions of its product. To do this, they randomly picked two groups, each with 50 customers, and asked them to rate their satisfaction on a scale of 1 to 10.

The first group tries version A, and the second group tries version B. The data they get looks like this:

| Group | Mean | Standard deviation |
|---|---|---|
| A | 7.5 | 1.5 |
| B | 8.2 | 1.3 |

To check if there’s a notable difference between the two product versions, you can use a test called the Student’s t-test for independent samples. The results of the test show a t-value of -2.69 and a p-value of 0.009.

Comparing this p-value to a 5% significance level, you can conclude that there’s a significant difference in customer satisfaction between the two versions. Simply put, there’s statistical evidence supporting the idea that customers prefer version B over version A.

This information is valuable for the company in deciding how to produce and market the product. It suggests that version B is likely more appealing to customers and, therefore, could be more profitable in the long term.
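Steps 3 to 6 can be retraced from the summary table alone. The sketch below is a hedged illustration, not the calculation the company would have run on the raw ratings: it uses the rounded means and standard deviations from the table, assumes equal variances (a pooled standard error), and takes the two-tailed critical value for 98 degrees of freedom from a t-table. The function name is hypothetical.

```python
import math


def t_from_summary(mean1, sd1, n1, mean2, sd2, n2):
    """Pooled-variance t statistic and degrees of freedom from summary statistics."""
    df = n1 + n2 - 2
    pooled_variance = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    standard_error = math.sqrt(pooled_variance * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / standard_error, df


# Version A vs. version B, 50 customers each (values from the table above).
t_stat, df = t_from_summary(7.5, 1.5, 50, 8.2, 1.3, 50)

T_CRIT = 1.984  # two-tailed critical value for alpha = 0.05 and df = 98, from a t-table
significant = abs(t_stat) > T_CRIT

print(f"t = {t_stat:.2f}, df = {df}, significant at the 5% level: {significant}")
```

From the rounded table values the statistic comes out near -2.49 rather than the reported -2.69; the gap is plausibly due to rounding in the table, and the conclusion at the 5% level is the same either way.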

What is the difference between the t-test and ANOVA?

The t-test and ANOVA (Analysis of Variance) are tools used to compare averages in different sets of data. However, there are some key differences between them:

- T-test: Used when comparing the average of two sets of data.

- ANOVA: Used when comparing the average of three or more sets of data.

- T-test: Works with a continuous numerical variable. The independent samples version requires independent data, while the paired version handles dependent (related) data.

- ANOVA: Works with continuous numerical variables and can handle both dependent and independent data.

- T-test: Gives a t value, showing how significant the mean difference is between two groups.

- ANOVA: Provides an F value, indicating the significance of mean differences among three or more groups.

- T-test: Examines one independent variable (factor) with two groups.

- ANOVA: Still analyzes a single dependent variable, but its factorial forms allow the examination of several independent factors simultaneously.
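The two tests are closely related: with exactly two groups, the one-way ANOVA F value equals the square of the pooled-variance t value. The following sketch checks this on made-up numbers using only the standard library; the function names and data are hypothetical.

```python
import math
import statistics


def pooled_t(x, y):
    """Two-sample t statistic with a pooled (equal-variance) standard error."""
    nx, ny = len(x), len(y)
    pooled_variance = (
        (nx - 1) * statistics.variance(x) + (ny - 1) * statistics.variance(y)
    ) / (nx + ny - 2)
    return (statistics.mean(x) - statistics.mean(y)) / math.sqrt(
        pooled_variance * (1 / nx + 1 / ny)
    )


def one_way_anova_f(*groups):
    """F = (between-group mean square) / (within-group mean square)."""
    all_values = [value for group in groups for value in group]
    grand_mean = statistics.mean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((value - statistics.mean(g)) ** 2 for g in groups for value in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical scores for three groups.
group_a = [10.0, 12.0, 9.0, 11.0]
group_b = [11.0, 14.0, 10.0, 13.0]
group_c = [15.0, 13.0, 16.0, 14.0]

t_value = pooled_t(group_b, group_a)
f_two_groups = one_way_anova_f(group_a, group_b)
f_three_groups = one_way_anova_f(group_a, group_b, group_c)

print(f"t^2 = {t_value ** 2:.4f}, F (two groups) = {f_two_groups:.4f}")
print(f"F (three groups) = {f_three_groups:.4f}")
```

The third call is something a single t-test cannot do: it compares all three group means in one analysis.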

In summary, the Student’s t-test is a valuable and flexible statistical technique that allows the mean of a sample to be compared with a hypothetical or known population mean, with a series of advantages that make it useful in various research contexts.

It is especially useful when working with small samples because it is based on the Student’s t distribution, which takes into account the additional uncertainty that occurs when working with small samples.


Neag School of Education

Educational Research Basics by Del Siegle

An introduction to statistics usually covers t tests, ANOVAs, and chi-square. For this course we will concentrate on t tests, although background information will be provided on ANOVAs and chi-square. A PowerPoint presentation on t tests has been created for your use.

The t test is one type of inferential statistics. It is used to determine whether there is a significant difference between the means of two groups. With all inferential statistics, we assume the dependent variable fits a normal distribution . When we assume a normal distribution exists, we can identify the probability of a particular outcome. We specify the level of probability (alpha level, level of significance, p ) we are willing to accept before we collect data ( p < .05 is a common value that is used). After we collect data we calculate a test statistic with a formula. We compare our test statistic with a critical value found on a table to see if our results fall within the acceptable level of probability. Modern computer programs calculate the test statistic for us and also provide the exact probability of obtaining that test statistic with the number of subjects we have.

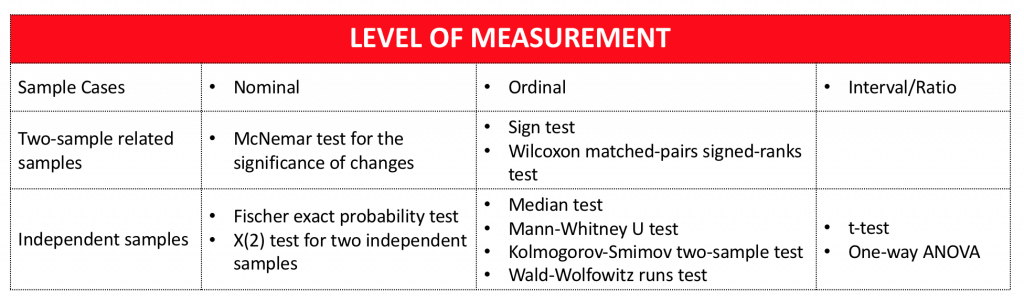

Student’s test ( t test) Notes

When the difference between two population averages is being investigated, a t test is used. In other words, a t test is used when we wish to compare two means (the scores must be measured on an interval or ratio measurement scale ). We would use a t test if we wished to compare the reading achievement of boys and girls. With a t test, we have one independent variable and one dependent variable. The independent variable (gender in this case) can only have two levels (male and female). The dependent variable would be reading achievement. If the independent had more than two levels, then we would use a one-way analysis of variance (ANOVA).

The test statistic that a t test produces is a t-value. Conceptually, t-values are an extension of z-scores. In a way, the t-value represents how many standard units the means of the two groups are apart.

With a t test, the researcher wants to state with some degree of confidence that the obtained difference between the means of the sample groups is too great to be a chance event and that some difference also exists in the population from which the sample was drawn. In other words, the difference that we might find between the boys’ and girls’ reading achievement in our sample might have occurred by chance, or it might exist in the population. If our t test produces a t-value that results in a probability of .01, we say that the likelihood of getting the difference we found by chance would be 1 in 100 times. We could say that it is unlikely that our results occurred by chance and the difference we found in the sample probably exists in the populations from which it was drawn.

Five factors contribute to whether the difference between two groups’ means can be considered significant:

- How large is the difference between the means of the two groups? Other factors being equal, the greater the difference between the two means, the greater the likelihood that a statistically significant mean difference exists. If the means of the two groups are far apart, we can be fairly confident that there is a real difference between them.

- How much overlap is there between the groups? This is a function of the variation within the groups. Other factors being equal, the smaller the variances of the two groups under consideration, the greater the likelihood that a statistically significant mean difference exists. We can be more confident that two groups differ when the scores within each group are close together.

- How many subjects are in the two samples? The size of the sample is extremely important in determining the significance of the difference between means. With increased sample size, means tend to become more stable representations of group performance. If the difference we find remains constant as we collect more and more data, we become more confident that we can trust the difference we are finding.

- What alpha level is being used to test the mean difference (how confident do you want to be about your statement that there is a mean difference)? A larger alpha level requires less difference between the means. It is much harder to find differences between groups when you are only willing to have your results occur by chance 1 out of 100 times (p < .01) as compared to 5 out of 100 times (p < .05).

- Is a directional (one-tailed) or non-directional (two-tailed) hypothesis being tested? Other factors being equal, smaller mean differences result in statistical significance with a directional hypothesis. For our purposes we will use non-directional (two-tailed) hypotheses.

I have created an Excel spreadsheet that performs t-tests (with a PowerPoint presentation that explains how to enter data and read it) and a PowerPoint presentation on t tests (you will probably find this useful).

Assumptions underlying the t test.

- The samples have been randomly drawn from their respective populations

- The scores in the population are normally distributed

- The scores in the populations have the same variance (s1=s2) Note: We use a different calculation for the standard error if they are not.

Three Types of t tests

- Pair-difference t test (a.k.a. t-test for dependent groups, correlated t test) df = n (number of pairs) -1

This is concerned with the difference between the average scores of a single sample of individuals who are assessed at two different times (such as before treatment and after treatment). It can also compare average scores of samples of individuals who are paired in some way (such as siblings, mothers, daughters, persons who are matched in terms of a particular characteristics).

- Equal Variance (Pooled-variance t-test) df=n (total of both groups) -2 Note: Used when both samples have the same number of subjects or when s1=s2 (Levene or F-max tests have p > .05).

- Unequal Variance (Separate-variance t test) df depends on a formula, but a rough estimate is one less than the smallest group Note: Used when the samples have different numbers of subjects and they have different variances, s1<>s2 (Levene or F-max tests have p < .05).
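The notes do not spell out the df formula for the separate-variance test. A common choice is the Welch-Satterthwaite approximation, sketched below as an assumption about what "a formula" refers to; the function names and the summary numbers are hypothetical.

```python
import math


def welch_df(sd1, n1, sd2, n2):
    """Welch-Satterthwaite approximate degrees of freedom."""
    v1 = sd1 ** 2 / n1  # squared standard error of group 1's mean
    v2 = sd2 ** 2 / n2  # squared standard error of group 2's mean
    return (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Separate-variance t statistic: the variances are not pooled."""
    standard_error = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    return (mean1 - mean2) / standard_error


# Hypothetical groups with different sizes and different spreads.
df = welch_df(sd1=4.0, n1=10, sd2=12.0, n2=40)
rough_estimate = min(10, 40) - 1   # "one less than the smallest group"
pooled_df = 10 + 40 - 2            # df of the equal-variance test

print(f"Welch df = {df:.2f} (rough estimate {rough_estimate}, pooled df {pooled_df})")
print(f"Welch t  = {welch_t(52.0, 4.0, 10, 47.0, 12.0, 40):.3f}")
```

The approximate df always falls between the rough estimate and the pooled df, so the rough estimate acts as a conservative lower bound.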

How do I decide which type of t test to use?

Note: The F-Max test can be substituted for the Levene test. The t test Excel spreadsheet that I created for our class uses the F -Max.

Type I and II errors

- Type I error: reject a null hypothesis that is really true (with tests of difference this means that you say there was a difference between the groups when there really was not a difference). The probability of making a Type I error is the alpha level you choose. If you set your probability (alpha level) at p < .05, then there is a 5% chance that you will make a Type I error. You can reduce the chance of making a Type I error by setting a smaller alpha level (p < .01). The problem with this is that as you lower the chance of making a Type I error, you increase the chance of making a Type II error.

- Type II error — fail to reject a null hypothesis that is false (with tests of differences this means that you say there was no difference between the groups when there really was one)
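The claim that the alpha level is the Type I error rate can be checked with a small simulation. In the sketch below (standard library only; the population values, group size, and seed are arbitrary assumptions), both groups are drawn from the same population, so every rejection is a Type I error. The critical value is the t-table entry for a two-tailed test at alpha = .05 with 18 degrees of freedom.

```python
import math
import random
import statistics

T_CRIT = 2.101  # two-tailed critical value, alpha = .05, df = 10 + 10 - 2 = 18


def pooled_t(x, y):
    """Equal-variance two-sample t statistic."""
    nx, ny = len(x), len(y)
    pooled_variance = (
        (nx - 1) * statistics.variance(x) + (ny - 1) * statistics.variance(y)
    ) / (nx + ny - 2)
    return (statistics.mean(x) - statistics.mean(y)) / math.sqrt(
        pooled_variance * (1 / nx + 1 / ny)
    )


def type_i_error_rate(trials=4000, n=10, seed=1):
    """Share of experiments that reject a null hypothesis that is actually true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        # Same population for both groups, so there is no real difference.
        x = [rng.gauss(50, 10) for _ in range(n)]
        y = [rng.gauss(50, 10) for _ in range(n)]
        if abs(pooled_t(x, y)) > T_CRIT:
            rejections += 1
    return rejections / trials


rate = type_i_error_rate()
print(f"Observed Type I error rate: {rate:.3f}")
```

The observed rate should land close to .05, with some simulation noise.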

Hypotheses (some ideas…)

- Non directional (two-tailed) Research Question: Is there a (statistically) significant difference between males and females with respect to math achievement? H0: There is no (statistically) significant difference between males and females with respect to math achievement. HA: There is a (statistically) significant difference between males and females with respect to math achievement.

- Directional (one-tailed) Research Question: Do males score significantly higher than females with respect to math achievement? H0: Males do not score significantly higher than females with respect to math achievement. HA: Males score significantly higher than females with respect to math achievement.

The basic idea for calculating a t-test is to find the difference between the means of the two groups and divide it by the STANDARD ERROR (OF THE DIFFERENCE), which is the standard deviation of the distribution of differences.

Just for your information: A CONFIDENCE INTERVAL for a two-tailed t-test is calculated by multiplying the CRITICAL VALUE times the STANDARD ERROR and adding and subtracting that to and from the difference of the two means.

EFFECT SIZE is used to calculate practical difference. If you have several thousand subjects, it is very easy to find a statistically significant difference. Whether that difference is practical or meaningful is another question. This is where effect size becomes important. With studies involving group differences, effect size is the difference of the two means divided by the standard deviation of the control group (or the average standard deviation of both groups if you do not have a control group). Generally, effect size is only important if you have statistical significance. An effect size of .2 is considered small, .5 is considered medium, and .8 is considered large.
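The confidence interval and effect size calculations described above can be sketched as follows. This is a minimal illustration with invented scores; the function names are hypothetical, the standard error is the pooled (equal-variance) one, and the critical value is the t-table entry for a two-tailed test at alpha = .05 with 6 degrees of freedom.

```python
import math
import statistics


def standard_error_of_difference(x, y):
    """Pooled standard error of the difference between two group means."""
    nx, ny = len(x), len(y)
    pooled_variance = (
        (nx - 1) * statistics.variance(x) + (ny - 1) * statistics.variance(y)
    ) / (nx + ny - 2)
    return math.sqrt(pooled_variance * (1 / nx + 1 / ny))


def confidence_interval(x, y, t_crit):
    """Difference of the means, plus and minus critical value times standard error."""
    difference = statistics.mean(x) - statistics.mean(y)
    margin = t_crit * standard_error_of_difference(x, y)
    return difference - margin, difference + margin


def effect_size(treatment, control):
    """Difference of the means divided by the control group's standard deviation."""
    return (statistics.mean(treatment) - statistics.mean(control)) / statistics.stdev(control)


# Hypothetical scores; four subjects per group gives df = 4 + 4 - 2 = 6.
treatment = [11.0, 14.0, 10.0, 13.0]
control = [10.0, 12.0, 9.0, 11.0]
T_CRIT = 2.447  # two-tailed critical value, alpha = .05, df = 6

low, high = confidence_interval(treatment, control, T_CRIT)
d = effect_size(treatment, control)

print(f"95% CI for the mean difference: ({low:.2f}, {high:.2f})")
print(f"Effect size: {d:.2f}")
```

In this made-up case the interval includes zero even though the effect size is above .8, which illustrates why a large effect size in a tiny sample should not be read on its own.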

A bit of history… William Sealy Gosset (1908) first published a t-test. He worked at the Guinness Brewery in Dublin and published under the name Student. The test was called Student's t test (later shortened to t test).

t tests can be easily computed with Excel or SPSS. I have created an Excel Spreadsheet that does a very nice job of calculating t values and other pertinent information.


Restor Dent Endod. 2019 Aug; 44(3).

Statistical notes for clinical researchers: the independent samples t-test

Hae-Young Kim

Department of Health Policy and Management, College of Health Science, and Department of Public Health Science, Graduate School, Korea University, Seoul, Korea.

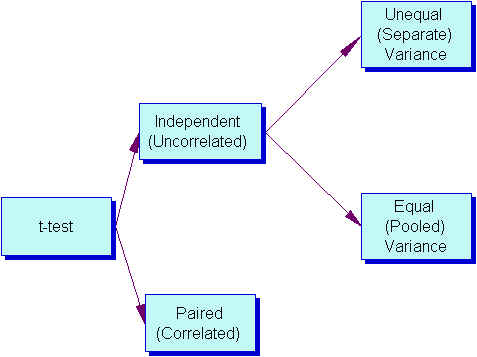

The t-test is frequently used to compare 2 group means. The compared groups may be independent of each other, such as men and women. Alternatively, the compared data may be correlated, as in a comparison of blood pressure levels from the same person before and after medication (Figure 1). In this section we will focus on the independent t-test only. There are 2 kinds of independent t-test, depending on whether the 2 group variances can be assumed equal or not. The t-test is based on inference using the t-distribution.

T-DISTRIBUTION

The t-distribution was introduced in 1908 by William Sealy Gosset, who was working for the Guinness brewery in Dublin, Ireland. As the Guinness brewery did not permit its employees to publish research results related to their work, Gosset published his findings under a pseudonym, “Student.” Therefore, the distribution he proposed was called Student's t-distribution. The t-distribution is similar to the standard normal distribution (z-distribution), but has a lower peak and heavier tails (Figure 2).

According to sampling theory, when samples are drawn from a normally distributed population, the distribution of sample means is expected to be a normal distribution. When we know the population variance, $\sigma^2$, we can define the distribution of sample means as a normal distribution and adopt the z-distribution in statistical inference. However, in reality we almost never know $\sigma^2$, so we use the sample variance, $s^2$, instead. Although $s^2$ is the best estimator for $\sigma^2$, the accuracy of $s^2$ depends on the sample size. When the sample size is large enough (e.g., n = 300), we expect the sample variance to be very similar to the population variance. However, when the sample size is small, such as n = 10, the accuracy of the sample variance may not be that high. The t-distribution reflects this difference in uncertainty according to sample size. Therefore, the shape of the t-distribution changes with the degrees of freedom (df), which is the sample size minus one (n − 1) when one sample mean is tested.

The t-distribution is a family of distributions whose shape varies according to its df (Figure 2). When df is smaller, the t-distribution has a lower peak and heavier tails than those with higher df. The shape of the t-distribution approaches the z-distribution as df increases. When df gets large enough, e.g., with n = 300, the t-distribution is almost identical to the z-distribution. For inference about means using small samples, it is necessary to apply the t-distribution, while similar inference can be obtained from either the t-distribution or the z-distribution with a large sample. For inference about 2 means, we generally use the t-test based on the t-distribution regardless of sample size because it is safe both for a test with small df and for one with large df.
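The convergence of the t-distribution toward the z-distribution can be checked numerically. The following is a minimal sketch using only the Python standard library; the helper names `t_pdf` and `z_pdf` and the df values 5, 30, and 300 are chosen here for illustration and are not taken from the article.

```python
import math

def t_pdf(x, df):
    """Density of Student's t-distribution with df degrees of freedom at x."""
    # Work on the log scale so the gamma functions stay finite for large df
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def z_pdf(x):
    """Density of the standard normal (z) distribution at x."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)

# Compare the peak (x = 0) and a tail point (x = 3) across degrees of freedom
for df in (5, 30, 300):
    print(f"df={df:>3}: peak={t_pdf(0.0, df):.4f}, tail={t_pdf(3.0, df):.4f}")
print(f"z     : peak={z_pdf(0.0):.4f}, tail={z_pdf(3.0):.4f}")
```

The peak rises and the tail density falls toward the normal values as df grows, which is the pattern described for Figure 2.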

INDEPENDENT SAMPLES T-TEST

To adopt the z- or t-distribution for inference using small samples, a basic assumption is that the population distribution is not significantly different from a normal distribution. As seen in Appendix 1, the normality assumption needs to be tested in advance. If the normality assumption cannot be met and we have a small sample (n < 25), then we are not permitted to use the ‘parametric’ t-test. Instead, a non-parametric analysis such as the Mann-Whitney U test should be selected.

For comparison of 2 independent group means, we can use a z-statistic to test the hypothesis of equal population means only if we know the population variances of the 2 groups, $\sigma_1^2$ and $\sigma_2^2$, as follows:

$$z = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}}} \tag{1}$$

where $\bar{X}_1$ and $\bar{X}_2$, $\sigma_1^2$ and $\sigma_2^2$, and $n_1$ and $n_2$ are the sample means, population variances, and sizes of the 2 groups.

Again, as we never know the population variances, we need to use sample variances as their estimates. There are 2 methods, depending on whether the 2 population variances can be assumed equal or not. Under the assumption of equal variances, the t-test devised by Gosset in 1908, Student's t-test, can be applied. The other version is Welch's t-test, introduced in 1947, for cases where the assumption of equal variances cannot be accepted because quite a big difference is observed between the 2 sample variances.

1. Student's t-test

In Student's t-test, the population variances are assumed equal. Therefore, we need only one common variance estimate for the 2 groups. The common variance estimate is calculated as a pooled variance, a weighted average of the 2 sample variances, as follows:

$$s_p^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2} \tag{2}$$

where $s_1^2$ and $s_2^2$ are the sample variances.

The resulting t-test statistic has the same form as the z-statistic, with both population variances, $\sigma_1^2$ and $\sigma_2^2$, replaced by the common variance estimate, $s_p^2$:

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{s_p^2\left(\dfrac{1}{n_1} + \dfrac{1}{n_2}\right)}} \tag{3}$$

The df for this t-test statistic is $n_1 + n_2 - 2$.

In Appendix 1, ‘(E-1) Levene's test for equality of variances’ shows that the null hypothesis of equal variances was not rejected, given the high p value of 0.334 (under the heading Sig.). In ‘(E-2) t-test for equality of means t-values’, the upper line shows the result of Student's t-test. The t-value and df are shown as −3.357 and 18. We can get the same figures using the formulas Eq. 2 and Eq. 3 and the descriptive statistics in Table 1, as follows.

| Group | No. | Mean | Standard deviation | p value |
|---|---|---|---|---|
| 1 | 10 | 10.28 | 0.5978 | 0.004 |
| 2 | 10 | 11.08 | 0.4590 | |

The result of the calculation is a little different from that produced by SPSS (IBM Corp., Armonk, NY, USA) in Appendix 1, probably because of rounding errors.
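As a rough cross-check, the pooled-variance calculation can be sketched in Python from the rounded summary statistics in Table 1. The variable names are chosen here for illustration; only the group sizes, means, and standard deviations come from the table. Because the inputs are rounded, the result should land close to, but not necessarily exactly on, the SPSS output.

```python
import math

# Summary statistics from Table 1 (rounded values as printed)
n1, mean1, sd1 = 10, 10.28, 0.5978
n2, mean2, sd2 = 10, 11.08, 0.4590

# Pooled variance: weighted average of the two sample variances
pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)

# Student's t statistic with the pooled variance in place of both population variances
se_pooled = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
t_student = (mean1 - mean2) / se_pooled
df_student = n1 + n2 - 2

print(f"pooled variance = {pooled_var:.4f}")
print(f"t = {t_student:.3f}, df = {df_student}")
```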

2. Welch's t-test

Actually, there are many cases where equal variances cannot be assumed. Even when equal variances cannot be assumed, we can still compare 2 independent group means by performing Welch's t-test. Welch's t-test is more reliable when the 2 samples have unequal variances and/or unequal sample sizes. We still need to maintain the assumption of normality.

Because the population variances are not equal, we have to estimate them separately by the 2 sample variances, $s_1^2$ and $s_2^2$. As a result, the t-test statistic takes the following form:

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}}}, \qquad \nu = \frac{\left(\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}\right)^2}{\dfrac{(s_1^2/n_1)^2}{n_1 - 1} + \dfrac{(s_2^2/n_2)^2}{n_2 - 1}}$$

where $\nu$ is the Satterthwaite degrees of freedom.

In Appendix 1, ‘(E-1) Levene's test for equality of variances’ shows that equal variances can reasonably be assumed (p = 0.334). Therefore, Welch's t-test is not needed for these data. Purely as an exercise, we can interpret the results of Welch's t-test shown in the lower line of ‘(E-2) t-test for equality of means t-values’. The t-value and df are shown as −3.357 and 16.875.
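The Welch statistic and the Satterthwaite degrees of freedom can be recomputed in the same way. This sketch again uses the rounded Table 1 values, with variable names chosen here for illustration; the Satterthwaite formula is the standard one and is assumed to be the one SPSS applies.

```python
import math

# Summary statistics from Table 1 (rounded values as printed)
n1, mean1, sd1 = 10, 10.28, 0.5978
n2, mean2, sd2 = 10, 11.08, 0.4590

# Per-group variance of the mean, estimated separately for each group
v1 = sd1**2 / n1
v2 = sd2**2 / n2

# Welch's t statistic: no pooling of the two sample variances
t_welch = (mean1 - mean2) / math.sqrt(v1 + v2)

# Satterthwaite approximation to the degrees of freedom
nu = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))

print(f"t = {t_welch:.3f}, df = {nu:.3f}")
```

With equal group sizes the Welch and Student t values coincide; only the degrees of freedom differ.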

We've confirmed nearly the same results by calculation using the formula and by SPSS software.

The t-test is one of the most frequently used analysis methods for comparing 2 group means. However, sometimes we forget the underlying assumptions, such as the normality assumption, or miss the meaning of the equal variance assumption. Especially when we have a small sample, we need to check the normality assumption first and decide between the parametric t-test and the nonparametric Mann-Whitney U test. Also, we need to assess the assumption of equal variances and select either Student's t-test or Welch's t-test.
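The selection procedure summarized above can be written as a small decision function. This is a sketch of the logic only: the function name `choose_test` and its arguments are hypothetical, the two boolean inputs are assumed to come from separate checks (for example a normality test and Levene's test), and applying the n < 25 cutoff to the smaller group is an assumption, since the text does not say whether n refers to each group or to the total.

```python
def choose_test(n_smallest_group, normality_ok, equal_variances_ok,
                small_sample_cutoff=25):
    """Pick a two-group comparison test following the article's decision rules."""
    # Small sample without normality: fall back to the nonparametric test
    if not normality_ok and n_smallest_group < small_sample_cutoff:
        return "Mann-Whitney U test"
    # Parametric branch: choose by the equal-variance assumption
    if equal_variances_ok:
        return "Student's t-test"
    return "Welch's t-test"

# Example matching Appendix 1: n = 10 per group, normality and equal variances accepted
print(choose_test(10, normality_ok=True, equal_variances_ok=True))
```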

Procedure of t-test analysis using IBM SPSS

The procedure of t-test analysis using IBM SPSS Statistics for Windows Version 23.0 (IBM Corp., Armonk, NY, USA) is as follows.


What Is a Research Design | Types, Guide & Examples

Published on June 7, 2021 by Shona McCombes . Revised on November 20, 2023 by Pritha Bhandari.

A research design is a strategy for answering your research question using empirical data. Creating a research design means making decisions about:

- Your overall research objectives and approach

- Whether you’ll rely on primary research or secondary research

- Your sampling methods or criteria for selecting subjects

- Your data collection methods

- The procedures you’ll follow to collect data

- Your data analysis methods

A well-planned research design helps ensure that your methods match your research objectives and that you use the right kind of analysis for your data.

Table of contents

- Step 1: Consider your aims and approach
- Step 2: Choose a type of research design
- Step 3: Identify your population and sampling method
- Step 4: Choose your data collection methods
- Step 5: Plan your data collection procedures
- Step 6: Decide on your data analysis strategies
- Other interesting articles
- Frequently asked questions about research design

Before you can start designing your research, you should already have a clear idea of the research question you want to investigate.

There are many different ways you could go about answering this question. Your research design choices should be driven by your aims and priorities—start by thinking carefully about what you want to achieve.

The first choice you need to make is whether you’ll take a qualitative or quantitative approach.

| Qualitative approach | Quantitative approach |
|---|---|
| | and describe frequencies, averages, and correlations about relationships between variables |

Qualitative research designs tend to be more flexible and inductive , allowing you to adjust your approach based on what you find throughout the research process.

Quantitative research designs tend to be more fixed and deductive , with variables and hypotheses clearly defined in advance of data collection.

It’s also possible to use a mixed-methods design that integrates aspects of both approaches. By combining qualitative and quantitative insights, you can gain a more complete picture of the problem you’re studying and strengthen the credibility of your conclusions.

Practical and ethical considerations when designing research

As well as scientific considerations, you need to think practically when designing your research. If your research involves people or animals, you also need to consider research ethics .

- How much time do you have to collect data and write up the research?

- Will you be able to gain access to the data you need (e.g., by travelling to a specific location or contacting specific people)?

- Do you have the necessary research skills (e.g., statistical analysis or interview techniques)?

- Will you need ethical approval ?

At each stage of the research design process, make sure that your choices are practically feasible.


Within both qualitative and quantitative approaches, there are several types of research design to choose from. Each type provides a framework for the overall shape of your research.

Types of quantitative research designs

Quantitative designs can be split into four main types.

- Experimental and quasi-experimental designs allow you to test cause-and-effect relationships.

- Descriptive and correlational designs allow you to measure variables and describe relationships between them.

| Type of design | Purpose and characteristics |
|---|---|
| Experimental | |
| Quasi-experimental | |
| Correlational | |
| Descriptive | |

With descriptive and correlational designs, you can get a clear picture of characteristics, trends and relationships as they exist in the real world. However, you can’t draw conclusions about cause and effect (because correlation doesn’t imply causation ).

Experiments are the strongest way to test cause-and-effect relationships without the risk of other variables influencing the results. However, their controlled conditions may not always reflect how things work in the real world. They’re often also more difficult and expensive to implement.

Types of qualitative research designs